Future-Proof, Not AI-Proof

Reflections from Assessment Horizons 2026 on feedback, authenticity and the questions we should be asking next

Reflections from Assessment Horizons 2026 on feedback, authenticity and the questions we should be asking next

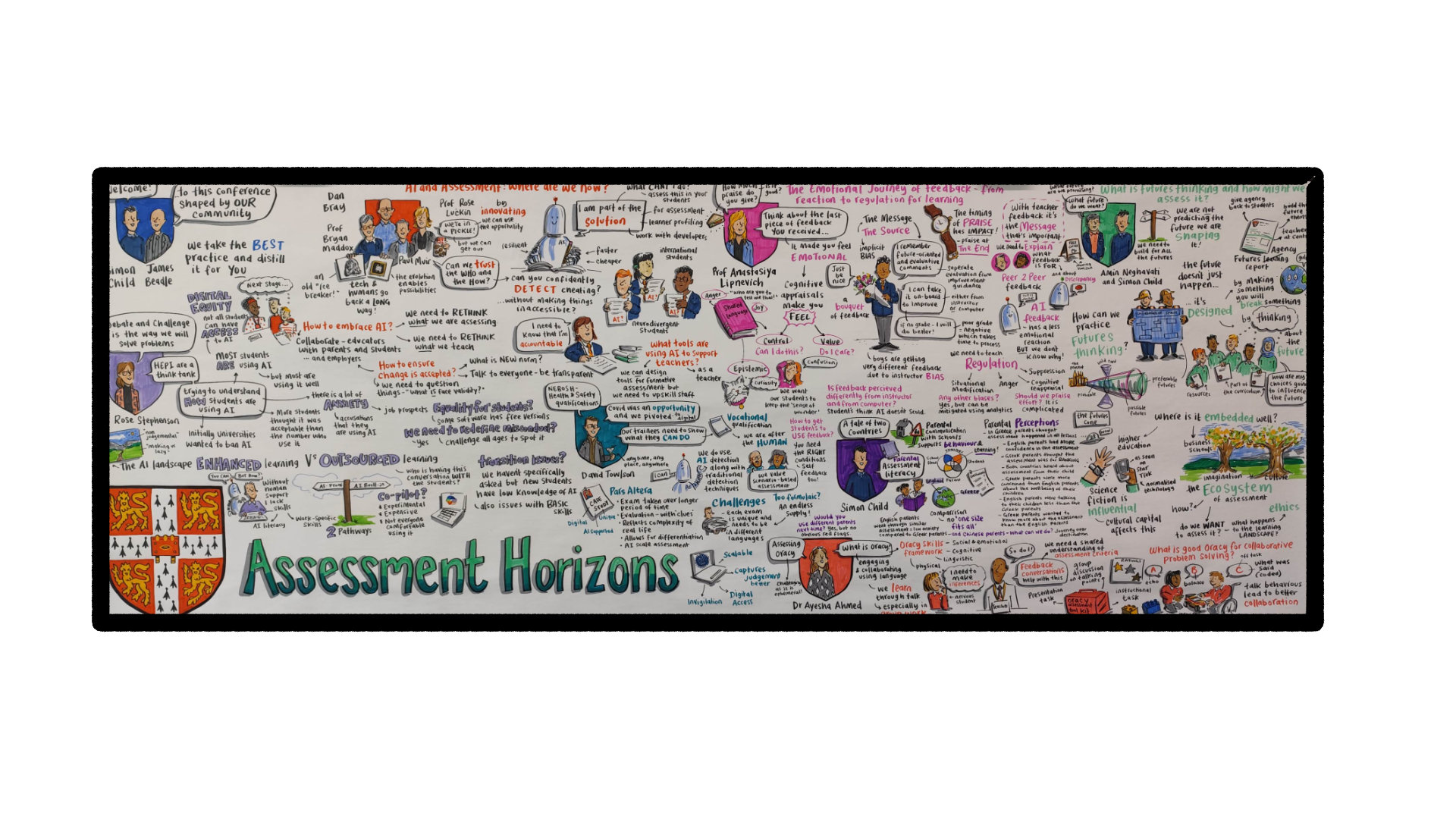

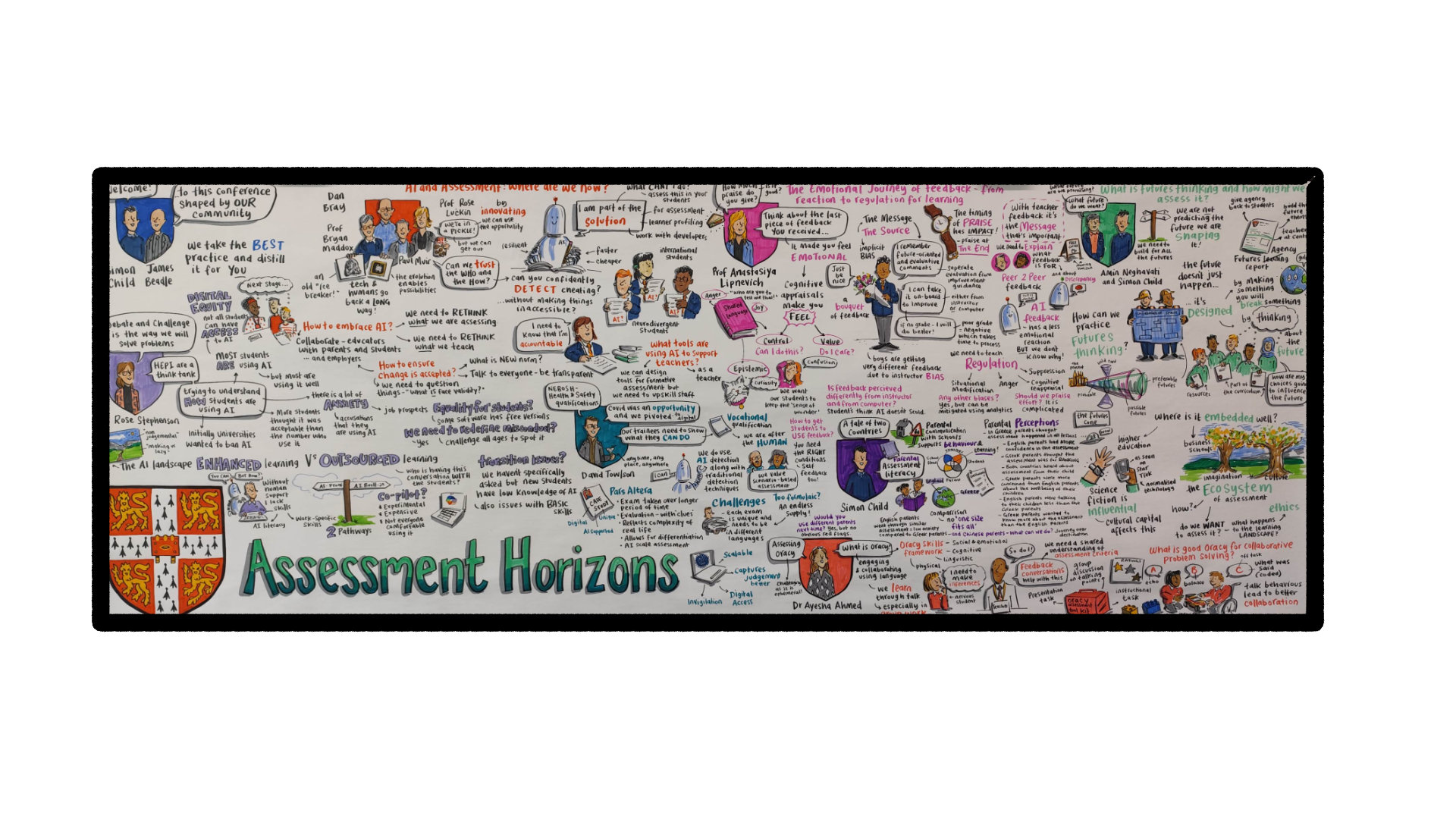

Assessment Horizons 2026, hosted by the Cambridge Assessment Network, brought together delegates from 18 countries to wrestle with a question that is becoming impossible to ignore: what does valid, meaningful assessment look like when AI is everywhere?

The conference ran as a hybrid event, with a dedicated online host and online-only sessions alongside the in-person programme in Cambridge, and whilst you cannot beat being in the room together, the Cambridge team pulled out all the stops to make the online experience genuinely participatory. Marianne Talbot, joining online, captured a theme that ran throughout the event: the importance of moving from prevention of AI misuse to resilience, and investing in intelligent security early in the test development process.

Prof Anastasiya Lipnevich's keynote on the emotional journey of feedback reframed something many of us thought we already understood. Her message was stark:

"If we as educators spent countless hours providing feedback and then if we put a grade on top of it there is a high chance that will not do anything with our feedback."

— Prof Anastasiya Lipnevich, Principal Measurement Scientist, National Board of Medical Examiners

The grade triggers an emotional response that crowds everything else out. What struck me was not a single finding but the overall picture. There is a time and a place for feedback, for praise, and for grades, but not always at the same time. Emotions have to be part of the design, not treated as noise.

"We need to separate evaluation from improvement guidance... this emotional nature of evaluation really takes away our attention from future oriented guidance that we are providing to our students."

— Prof Anastasiya Lipnevich

Lipnevich also presented evidence that students engaging with AI-generated feedback often process it more productively, noting that "emotions are significantly less intense in the AI condition." That is fascinating, but it needs a health warning. AI feedback that is sycophantic, that praises and validates everything regardless of quality, is arguably worse than no feedback at all. And uncalibrated AI, or a weaker model, can produce feedback that is simply inaccurate. The quality of the tool matters enormously.

Kirsty Parkinson from CIPS shared how her team responded to the disruption of generative AI across their assignment-based qualifications. Her opening premise set the tone for the entire session:

"My premise really today is to start with the idea that there is no such thing as an AI-proof assessment."

— Kirsty Parkinson, Head of Assessment Development, CIPS

What impressed me most was that they did not try to bolt detection or security onto the existing system. They went right back to the syllabus and rethought the model from the ground up, fundamentally looking at the design of the programme rather than tweaking around the edges. Kirsty was candid about the problems with detection, noting that markers did not trust the detection software, false positives were damaging relationships with candidates, and employer confidence was being eroded. Her message was clear: "It's a multifaceted approach, it's not just one thing." AI can support the process, but the learner's own thinking must remain visible.

This shift from prevention to design was echoed across the conference. As Nork Zakarian reflected on LinkedIn afterwards:

"AI detection tools are unreliable and prone to false positives, specifically disadvantaging non-native English speakers. And so we have to ask: is our assessment architecture designed to flex with AI, or are we building walls around a moving target?"

— Nork Zakarian, The Cambridge Access Validating Agency (CAVA)

Dr Rebecca Conway's session on assessing essential skills for work brought a different but equally urgent dimension. Drawing on CIPD research showing that only 28% of employers who had recently recruited a young person considered them prepared for the world of work, Conway explored the competencies that underpin workplace readiness and how we might actually assess them. The skills that matter most, communication, collaboration, critical thinking, problem-solving, are precisely the ones that traditional written assessments struggle to capture. Her session showcased approaches already being delivered effectively and at scale in colleges across the UK, including virtual simulations through Bodyswaps and WorldSkills UK competitions, offering practical models for how we might close that gap.

As Conway reflected on LinkedIn after the conference, Lipnevich's session drew on global case studies to articulate what works in delivering effective student feedback, while her own session explored different approaches to developing essential skills using examples already working at scale. That combination of research-grounded feedback design and practical skills assessment felt like the conference at its strongest.

This connects directly to the AI question. If a profession now requires practitioners to work with AI tools, then restricting their use during assessment undermines validity rather than protecting it. But if we assess a skill that involves using AI, we need to ensure that access is level for all learners. And this is where our sector needs to be much more careful in how we communicate AI use cases. Not all AI is the same. The gap between a free chatbot and a paid reasoning model, between Grok and Claude, between GPT-4o and Claude Opus, is enormous. A student using a free, non-reasoning model is having a fundamentally different experience from one using an advanced tool with extended thinking capabilities. If we are going to integrate AI into assessment, we owe it to learners to be honest about that variation.

It was encouraging to hear several speakers reference content shared by the Test Community Network during their presentations, a good sign that people are listening and that the broader community conversation is feeding into the academic and practitioner discourse.

The question I am left with is not whether AI belongs in assessment. It does. The question is whether we are brave enough to measure what matters most, even when it is harder to assess, rather than defaulting to what is easy to measure. Lipnevich's research reminds us there is no perfect model. It is about balancing purpose, timing, emotion, and the skills that give best value to both the learner and the employer.

As someone about to deliver a course on AI and automation, I know that for the vast majority of that programme, you simply cannot complete it without AI. I would not even attempt to lock it out unless it were a centre-delivered test. The future of assessment is not about building higher walls. It is about asking better questions.

Thank you to James Beadle, Dr Simon Child and Rosie Applin from the Cambridge University Press & Assessment team for another outstanding conference.