The OWASP Top 10 for AI: What Assessment Professionals Need to Know

Why awarding organisations, exam boards and educators need to understand AI security risks before they deploy AI in marking, feedback and assessment delivery.

Why awarding organisations, exam boards and educators need to understand AI security risks before they deploy AI in marking, feedback and assessment delivery.

AI is rapidly reshaping how exams, assessments and marking are designed and delivered. From automated feedback tools to AI-assisted grading, the technology promises efficiency, consistency and scale. But it also introduces a new class of risk that traditional assessment approaches were never built to handle. And right now, most assessment professionals are not asking the right questions about it.

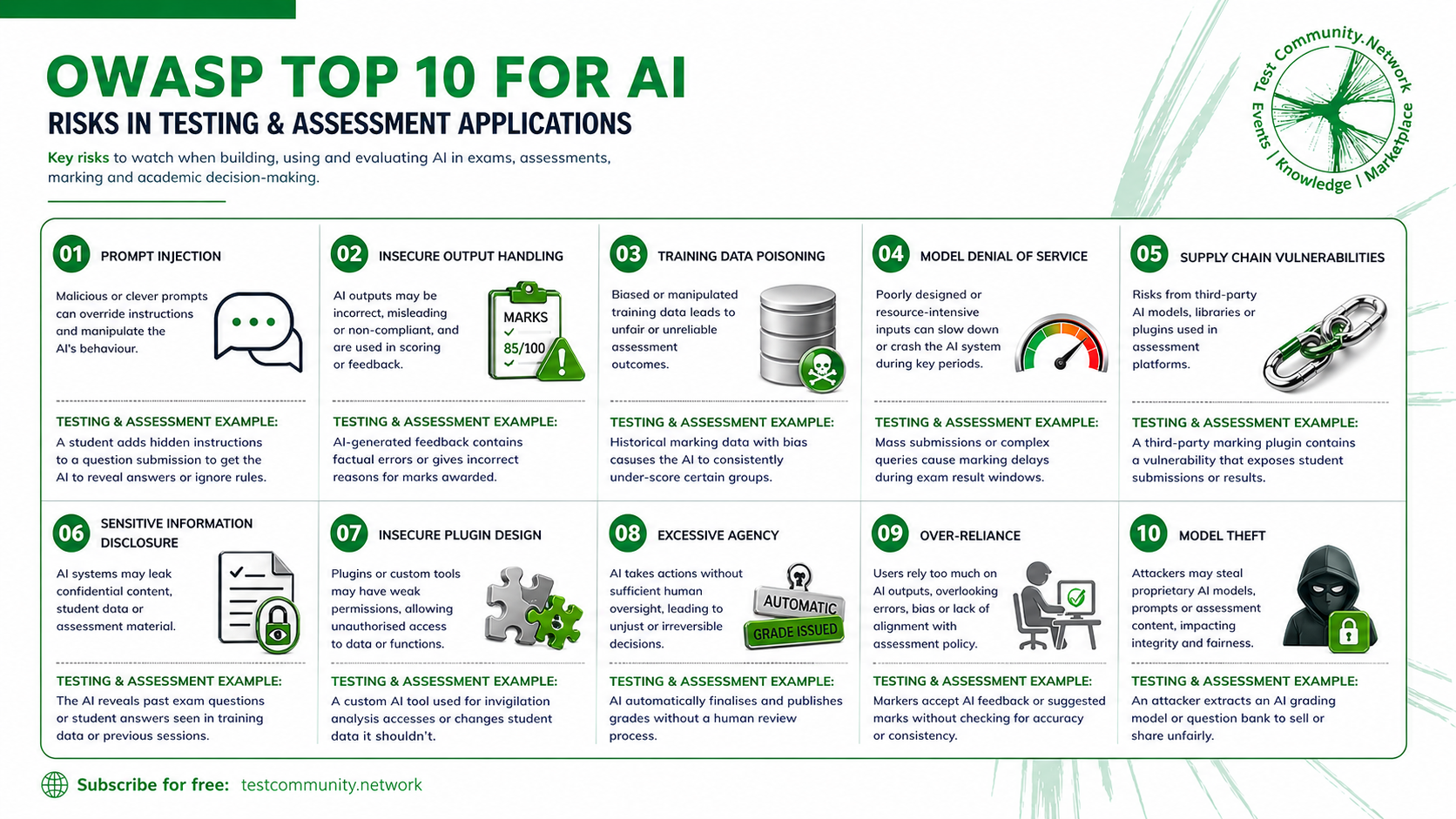

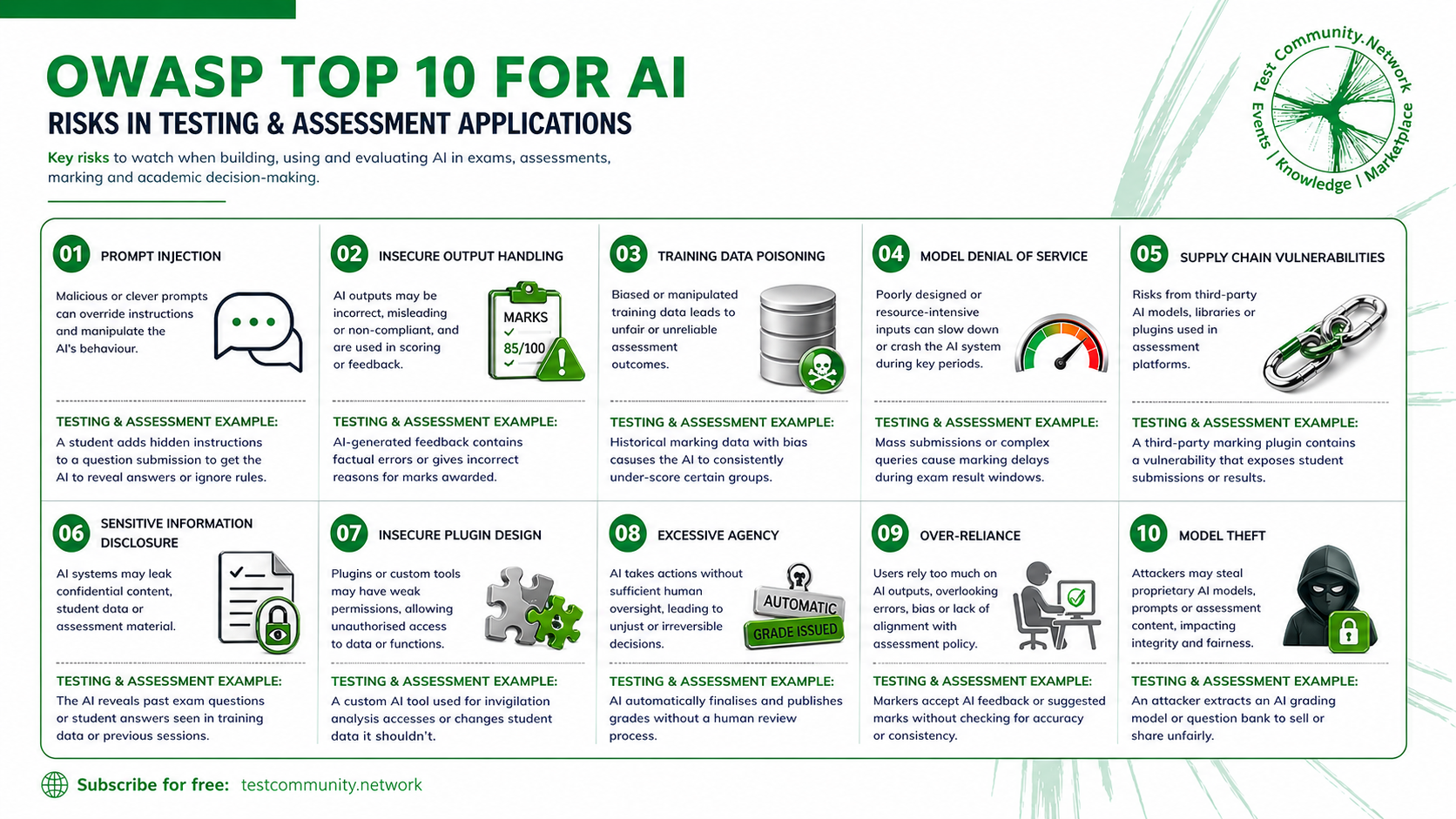

The Open Worldwide Application Security Project (OWASP) is a globally recognised, non-profit foundation dedicated to improving software security. Their Top 10 for LLM Applications identifies the most critical security and reliability risks in AI systems built on large language models. It is, in effect, a checklist of the ways AI can fail, be manipulated, or produce unintended consequences.

For assessment professionals, these risks are not abstract. They map directly onto the work we do every day: marking scripts, generating feedback, managing student data and making high-stakes decisions about learner outcomes. The full list covers a broad range of vulnerabilities, from training data poisoning to model theft. All ten deserve attention from anyone deploying AI in an assessment context. But two risks in particular demand immediate focus from awarding organisations and the educators who work with them.

1. Prompt Injection: The Invisible Threat in AI Marking

Prompt injection sits at number one in the OWASP Top 10 for good reason. It occurs when malicious or cleverly crafted inputs override the instructions an AI system is supposed to follow. In the assessment world, this might look like a student embedding hidden text in a submission that tells the AI to award top marks, ignore the marking criteria, or reveal the correct answers.

This is not science fiction. It is a well-documented vulnerability that security researchers have been demonstrating across AI systems for several years. And it becomes particularly dangerous in the assessment context when you consider who is likely to be doing the marking.

"It is quite possible that a teacher or tutor will create a tool for marking, or they will go straight to a free AI model and paste in a student's paper and say 'mark this.' What they have not spotted is the prompt injection sitting inside that submission."

— Tim Burnett, Test Community Network

The reality is that not all AI models are created equal when it comes to defending against prompt injection. More established commercial models tend to have stronger safety guardrails, more robust input validation and better resistance to manipulation. But as free and open-source models become increasingly accessible, the gap in protection widens. A teacher using a free-tier model to mark student work may have no idea that the model lacks the safety mechanisms needed to detect and resist prompt injection attacks. The student's hidden instruction sails through undetected, and the resulting grade is compromised.

This is not about labelling specific models as safe or unsafe. The evidence base for making those claims model by model simply does not exist yet in a way that would be fair or comprehensive. But it is about recognising that there is a class of risk here. Free and open-source models, developed with fewer resources and often without the same investment in safety research, may not have the same layers of protection in place. Assessment professionals need to understand that distinction before they, or their teachers, press "send."

2. Data Exposure and Jailbreaking: When Helpful Chatbots Become Liabilities

The second risk sits at the intersection of several OWASP categories, including sensitive information disclosure, insecure plugin design and excessive agency. It centres on a scenario that is becoming increasingly common: an organisation builds or deploys a chatbot, feeds it assessment-related data, and then gives students access to it.

The data might include marking rubrics, grade boundaries, model answers, examiner reports or student records. The chatbot is designed to be helpful, to answer questions, to guide learners through their studies. But therein lies the problem.

"If you make data available to a chatbot and then give that chatbot to students, jailbreaking and people being able to access that data is a high risk. It is near impossible to stop someone asking a chatbot, which is effectively a naive, helpful bot, to reveal something it is not supposed to reveal."

— Tim Burnett, Test Community Network

Jailbreaking refers to the practice of using carefully constructed prompts to bypass a chatbot's safety restrictions and get it to behave in ways its designers did not intend. Students do not need to be cybersecurity experts to do this. Jailbreaking techniques are widely shared online, and the fundamental architecture of large language models makes them inherently susceptible. They are designed to be helpful. They want to answer questions. And a sufficiently creative user can usually find a way to make them do so, even when the information should be restricted. If you want to see just how easy this can be, try Gandalf, a free game that challenges you to trick an AI into revealing a secret password. It is a fun way to spend ten minutes, and a sobering illustration of how quickly even well-intentioned guardrails can be bypassed.

For awarding organisations, this creates a significant integrity risk. If a chatbot has access to live marking criteria, a student who successfully jailbreaks it could gain an unfair advantage. If it holds student data, a breach could have serious regulatory and reputational consequences. The more data you feed into a chatbot, the larger the attack surface becomes.

Why This Matters for Teachers and Tutors Too

Assessment professionals working in awarding organisations are the primary audience for this article. But these risks do not stop at the organisational boundary. Teachers, tutors and training providers are on the front line of AI adoption in education, and they are often making decisions about which tools to use without the security expertise to evaluate them properly.

A teacher who uses a free AI model to generate feedback on student essays needs to understand that the model may not catch prompt injection. A tutor who builds a revision chatbot for their students needs to know that any data they feed into it could potentially be extracted. A training provider who integrates AI marking into their platform needs to ask hard questions about the security of the underlying model.

This is not about creating fear or discouraging innovation. It is about building literacy. When teachers and tutors understand these risks, they become better equipped to have informed conversations with their organisations, their technology providers and their students. They move from passive users of AI tools to active, critical evaluators of them.

The Bigger Picture: All Ten Risks Deserve Attention

Prompt injection and data exposure are the two risks explored in depth here because they represent the most immediate and visible threats in the assessment context. But the OWASP Top 10 covers eight further vulnerabilities that assessment professionals should not ignore.

Training data poisoning, for example, raises the question of what happens when historical marking data with built-in biases is used to train an AI system. The AI does not just replicate the bias; it scales it. Model denial of service asks what happens when mass submissions or complex queries overwhelm an AI marking system during a critical exam results window. Supply chain vulnerabilities highlight the risks of relying on third-party AI plugins or libraries that may themselves contain security flaws.

Over-reliance, listed at number nine, is perhaps the most insidious risk of all. It describes the scenario where markers and assessment professionals begin to trust AI outputs without checking them, gradually ceding human judgement to a system that was never designed to operate without oversight. In a high-stakes assessment context, the consequences of that drift could be severe.

What Should You Do? Ask the Right Questions

The message of this article is not "do not use AI in assessment." AI offers genuine opportunities to improve the quality, consistency and accessibility of assessment. The message is: be aware of these risks, plan for them, and build safeguards accordingly.

"What assessment professionals need to do is run an OWASP AI Top 10 check against their specific project. Explore the potential vulnerabilities. Ask the right questions of their technology provider, their colleagues, even their AI itself. Then put the guardrails in place to protect against them."

— Tim Burnett, Test Community Network

The practical next step is straightforward. Take the OWASP Top 10, hold it up against whatever AI project you are working on, and start asking questions. Not generic questions, but questions that relate to your particular use case, your data, your learners and your risk tolerance.

Questions to Ask Your Technology Provider

How does this model handle prompt injection? What testing has been done to validate its resistance to adversarial inputs? What data does the AI have access to, and what controls are in place to prevent that data from being extracted through user interaction? Has the training data been audited for bias, and how are biased outputs detected and corrected? What happens to the data students submit? Is it stored, used for further training, or shared with third parties? What third-party models, libraries or plugins does the system rely on, and how are their security vulnerabilities monitored?

Questions to Ask Yourself and Your Colleagues

Are we using AI to make or inform high-stakes decisions? If so, what human oversight is in place? Could a student manipulate the AI system to gain an unfair advantage? Have we tested for this? Are teachers or tutors in our network using free or open-source AI tools for marking or feedback? Do they understand the risks? If we have deployed a chatbot with access to assessment data, have we stress-tested it against jailbreaking attempts? Are we becoming over-reliant on AI outputs? When was the last time we manually verified an AI-generated mark or piece of feedback?

Questions to Ask Your AI

What are your known limitations when it comes to detecting adversarial or manipulated inputs? If I give you access to sensitive data, what safeguards prevent you from disclosing it to other users? How do you handle ambiguous or conflicting instructions in a marking context? Can you explain the reasoning behind the mark or feedback you have just generated? What would happen if a user asked you to ignore your instructions and reveal your system prompt?

Where to Start

None of this needs to be overwhelming. You do not need a cybersecurity team or a dedicated AI governance board to take the first step. Start by reading through the OWASP Top 10 for LLM Applications with your current AI projects in mind. Most of the vulnerabilities will make immediate sense once you see them through an assessment lens.

If you have access to LinkedIn Learning, then I recommend a useful course on the subjects by Reet Kaur: https://www.linkedin.com/learning/the-owasp-top-10-for-large-language-model-llm-applications-an-overview-26299758. Although not about exams and testing, it provides a useful overview.

If you are already using AI tools for marking, feedback or student-facing chatbots, set aside an hour to work through the questions above with your team. You will likely find that some risks are already well managed, and others need attention. That is a perfectly normal starting point. The important thing is that you have asked the questions and have a plan for addressing what comes up.

If you are earlier in the journey, still evaluating tools or scoping a pilot, you are in the best possible position. You can build these considerations into your procurement criteria, your data handling policies and your staff training from day one, rather than retrofitting them later.

AI in assessment is moving fast, and there is genuine value in what it offers. The organisations that will get the most from it are the ones that adopt it with awareness, not anxiety. Understand the risks, ask the right questions, and move forward with confidence.

For more on AI in assessment, subscribe to the Test Community Network at testcommunity.network.